Introduction

There is a great deal of excitement around AI agents right now, and for good reason. The idea is attractive: a system that does not just answer questions but carries work through several steps, such as searching, comparing, summarising, updating, and notifying.

The problem begins when the agent is treated not as an enterprise component, but as a general-purpose magic tool.

What is an agent really?

Put simply, it is an AI-supported process component that can perform several steps independently in pursuit of a goal.

That can be useful, but it is important to see that an agent:

- calls tools,

- accesses data,

- follows decision rules,

- and in some cases creates external effects.

So an agent is not just a “smarter chatbot”. It is an automated execution element.
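To make the "execution element" view concrete, here is a minimal Python sketch of how an agent's capabilities could be declared. The `Tool` class, its fields, and the `footprint` helper are hypothetical names chosen for illustration, not part of any particular agent framework. Decision rules are left out to keep the sketch short.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Tool:
    """One capability the agent may call (all names here are illustrative)."""
    name: str
    run: Callable[[str], str]
    reads: tuple[str, ...] = ()      # data sources the tool accesses
    external_effect: bool = False    # True if it changes something outside the agent


def footprint(tools: list[Tool]) -> dict[str, list[str]]:
    """Summarise what an agent equipped with these tools can touch."""
    return {
        "tools": [t.name for t in tools],
        "data": sorted({src for t in tools for src in t.reads}),
        "external_effects": [t.name for t in tools if t.external_effect],
    }


# Hypothetical toolset: one read-only tool, one with an external effect.
tools = [
    Tool("search_tickets", lambda q: f"results for {q}", reads=("ticket_db",)),
    Tool("send_email", lambda body: "sent", external_effect=True),
]
print(footprint(tools))
```

Writing the footprint down like this makes it easier to review an agent the way any other automated component would be reviewed: by what it can call, read, and change.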

Where do agents create real value?

Internal research and synthesis work

For example, assembling a summary from multiple sources.

Operational preparation

Categorising tickets, gathering background information, drafting an initial status report.

Standardisable workflows

Situations where the steps are at least somewhat predictable.

Where are they dangerous?

When the goal is vague

If the agent gets instructions that are too general, it can easily drift off target.

When access is too broad

A powerful agent with overly broad permissions is a bigger risk than a simple chat interface, because it can act on everything it can reach.

Without output control

If the agent writes directly into systems without human review, mistakes scale quickly.

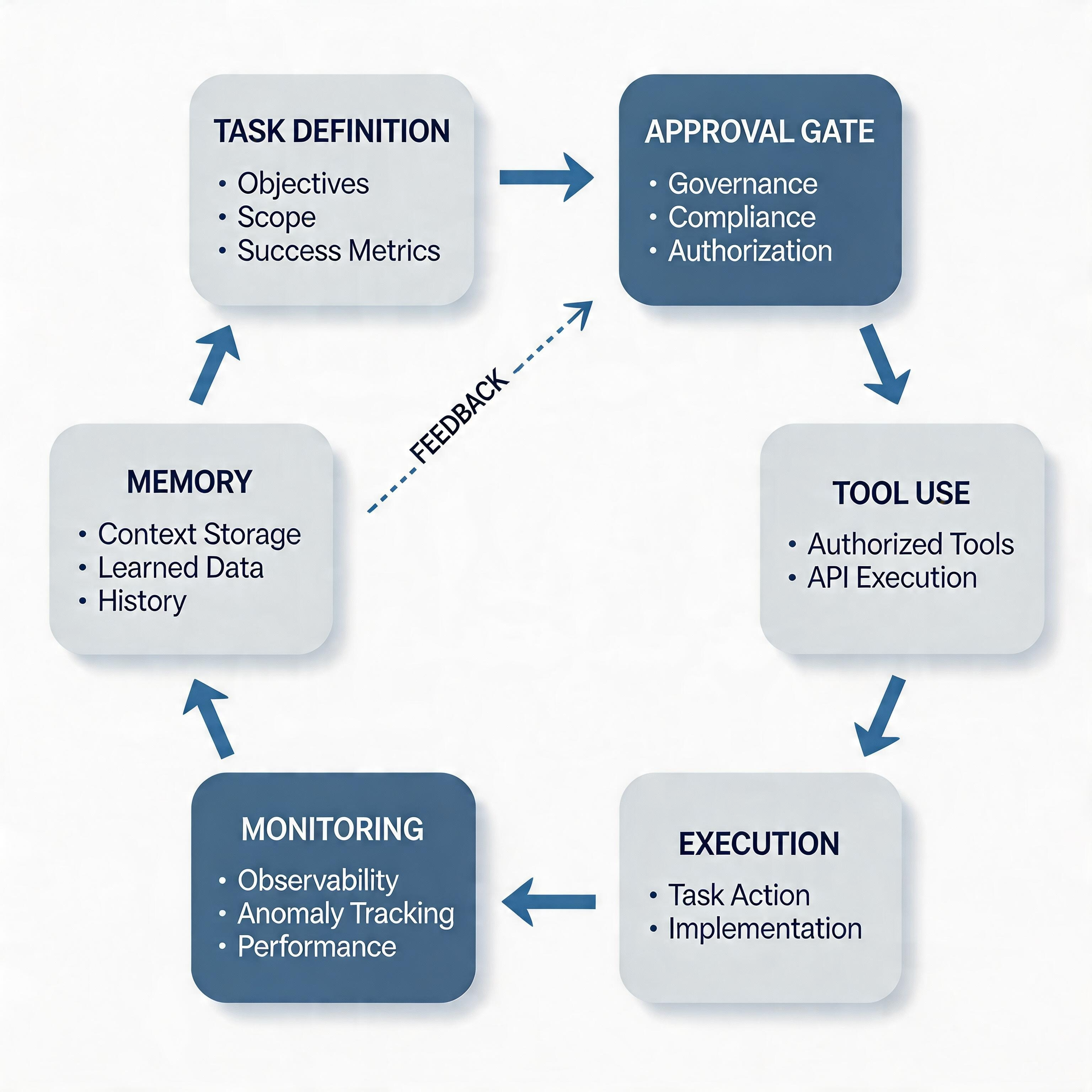

Four control points for agents

1. Narrow scope

A clearly defined goal and toolset.

2. Access boundaries

Follow the principle of least privilege.

3. Observability

It should be visible which steps the agent actually took.

4. Human override

It must be possible to stop, override, or roll back the agent.
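The four control points can be sketched in a few lines of Python. This is an illustrative wrapper under stated assumptions, not a reference implementation: `GuardedAgent`, `AgentStopped`, and the tool names are all hypothetical. Rollback is not covered here, since it depends on the target system.

```python
from dataclasses import dataclass, field
from typing import Callable


class AgentStopped(Exception):
    """Raised when a human has halted the agent."""


@dataclass
class GuardedAgent:
    goal: str                                      # 1. narrow scope: one stated goal
    tools: dict[str, Callable[[str], str]]         # 1. narrow scope: explicit toolset
    allowed: set[str]                              # 2. least privilege: tools this run may use
    log: list[dict] = field(default_factory=list)  # 3. observability: record of every attempt
    stopped: bool = False                          # 4. human override

    def stop(self) -> None:
        """Human override: no further tool calls are made after this."""
        self.stopped = True

    def call(self, tool: str, arg: str) -> str:
        if self.stopped:
            raise AgentStopped("halted by operator")
        if tool not in self.tools or tool not in self.allowed:
            # Denied attempts are logged too, so drift is visible.
            self.log.append({"tool": tool, "arg": arg, "status": "denied"})
            raise PermissionError(f"tool not permitted: {tool}")
        result = self.tools[tool](arg)
        self.log.append({"tool": tool, "arg": arg, "status": "ok"})
        return result


# Hypothetical usage: the agent may read tickets but not close them.
agent = GuardedAgent(
    goal="summarise open tickets",
    tools={"read_ticket": lambda i: f"ticket {i}", "close_ticket": lambda i: "closed"},
    allowed={"read_ticket"},
)
agent.call("read_ticket", "42")
```

In this sketch a call to `close_ticket` raises `PermissionError` and is recorded as denied in `agent.log`, and after `agent.stop()` every further call raises `AgentStopped`.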

A better starting pattern

The first agent should not be fully autonomous. A much better starting pattern is a semi-autonomous assistant:

- it prepares work on its own,

- makes suggestions,

- stops before execution,

- and asks for human approval.

This is also the stronger option commercially: the organisation learns how to work with agents while keeping risk proportionate.
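One way to express the semi-autonomous pattern described above in code is to separate preparation from execution, so the agent returns a proposal rather than performing the action. The sketch below is a minimal Python illustration. `Proposal`, `prepare_status_update`, and `run_with_approval` are hypothetical names, and the "posting" step is a stand-in for whatever system the agent would write to.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Proposal:
    """A prepared action that has not been executed yet."""
    summary: str
    execute: Callable[[], str]


def prepare_status_update(ticket_id: str, notes: list[str]) -> Proposal:
    # The agent does the preparation on its own...
    draft = f"Ticket {ticket_id}: " + "; ".join(notes)
    # ...and packages the side effect instead of performing it.
    return Proposal(summary=draft, execute=lambda: f"posted: {draft}")


def run_with_approval(proposal: Proposal, approve: Callable[[str], bool]) -> Optional[str]:
    """Execute only if the human reviewer approves; otherwise do nothing."""
    if approve(proposal.summary):
        return proposal.execute()
    return None


proposal = prepare_status_update("T-17", ["root cause found", "fix scheduled"])

# Stand-in reviewers; in practice `approve` would ask a person.
rejected = run_with_approval(proposal, approve=lambda summary: False)
accepted = run_with_approval(proposal, approve=lambda summary: True)
print(rejected, accepted, sep="\n")
```

The design choice is that nothing with an external effect runs inside the preparation step, so a rejected proposal leaves the target system untouched.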

Closing

There is real potential in agents. But in a mature enterprise environment the goal is not to make them as autonomous as possible. The goal is to make them exactly as autonomous as the process and the risk level allow.